August is the time of year when finalized standardized test scores are released to school districts and shortly thereafter shared publicly. It is a time for celebration, frustration, disappointment, and sometimes even a sense of panic or urgency that leads to questions such as, “What are we going to do? How do we share these with our community?”

As large-scale standardized tests have increasingly been used for higher-stakes decisions and as measures of school effectiveness, a sense has grown that the overall purpose of school is to achieve a certain score or better on a standardized test, rather than for students to achieve the critical learning and confidence that will propel them to success in the future. We treat standardized test scores as an end instead of as a measure, or as data that informs us about one aspect of students’ achievement. While the overall purpose of school may be debatable and more of a philosophical argument, the best and most productive use of various types of assessment data, including standardized test scores, is clear.

Standardized test scores are an important data point that can tell us something about the overall effectiveness of our curriculum, instruction, and school programming. For which groups of students did our system work? When we see holes in achievement, or gaps in learning for groups of students, we know we need to change something to better meet the needs of those targeted groups. Analyzing standardized test scores over time is even more useful and can help us see patterns of effective classrooms, teaching, and schools. Standardized test scores were never intended to be measures of success for individual students (Marsh & Willis, 2007; Schneider, Egan, & Julian, 2013). While these tests do assess standards, and the items have been field tested and correlated against other items to ensure a more valid measure of those standards, each test is still a snapshot, and it is limited in how much it can tell students and teachers about learning strengths and next steps.

Classroom assessments – both the informal check-ins and the more formal collection of evidence of proficiency often found in tests and projects – have more impact on helping students get powerful information to achieve at higher levels. Again, the standardized test is an important snapshot of overall effectiveness, especially for district and school leadership, and for teachers as they look across time and groups of students. However, when standardized test scores are used as an end, problems occur and the school culture shifts further away from an emphasis on learning.

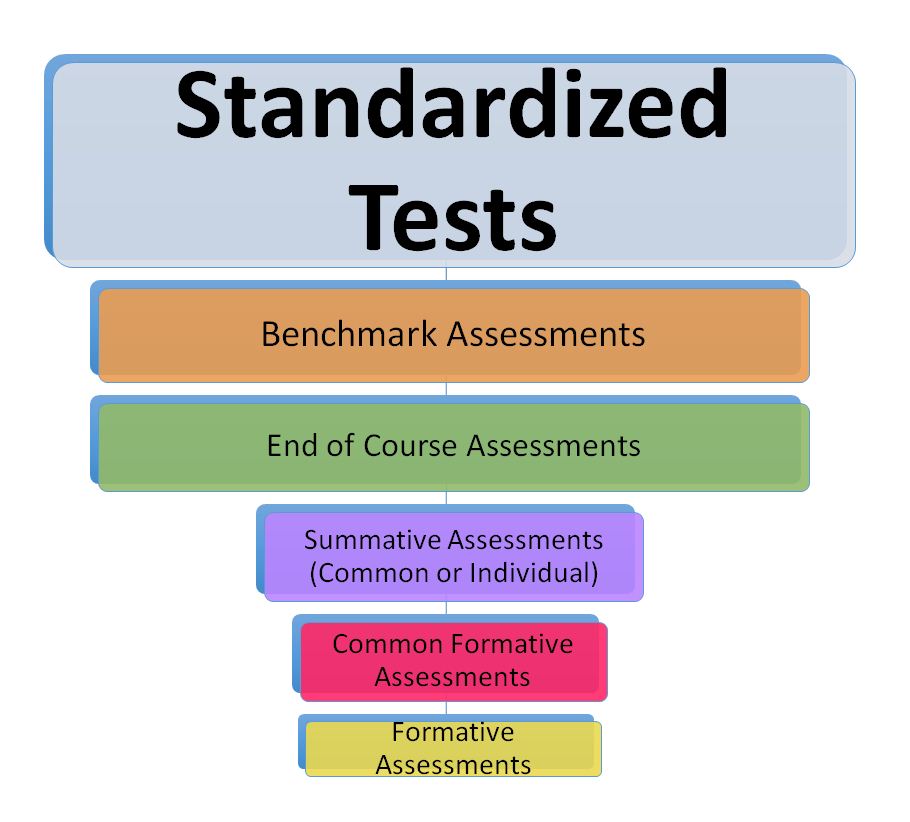

When schools experience low test scores, there may be a tendency to do more practice of standardized test-like items. Items are embedded into the opening of a class or students take many practice exams and are asked to set goals around getting higher test scores. In some cases, incentives are offered where those students who achieve on the test get a pizza party. While students do need some practice so that they are familiar with the organization and method of the standardized assessment, too much practice causes students to become fatigued, disconnected from learning, and stressed in ways that affect their performance. Schools often see an initial bump in scores when they focus on the method, but those same scores quickly stagnate or drop (Amrein & Berliner, 2003; Allensworth, Correa, & Ponisciak, 2008; Wiliam, 2013). When schools offer incentives for students to achieve on the standardized test, like a pizza party or a field trip, it leaves the responsibility for learning in the lap of students. And, in many cases, students are not holding back. They don’t know what they need to do to learn the skills necessary to achieve a higher score, so they give up. It can look like students don’t care, but in all too many cases it’s more that they don’t know. Standardized tests evaluate teaching more than learning. A top-heavy assessment practice might look like the graphic below:

When the majority of time spent by teachers and students is on standardized tests, our assessment practices are top-heavy and achievement is often shaky. Standardized tests, benchmark assessments (often designed to see how students are progressing towards achievement on a standardized test), and end-of-course assessments are more about evaluating teaching and instruction. When too much time is spent on these types of assessment, teachers don’t get enough information to help them understand why students aren’t achieving. It is the analysis of what is or is not contributing to students’ achievement that leads to better instruction and more learning (Schneider, Egan, & Julian, 2013). Standardized assessment may point the way, but assessment closer to the classroom offers more sensitive, meaningful information that enhances learning.

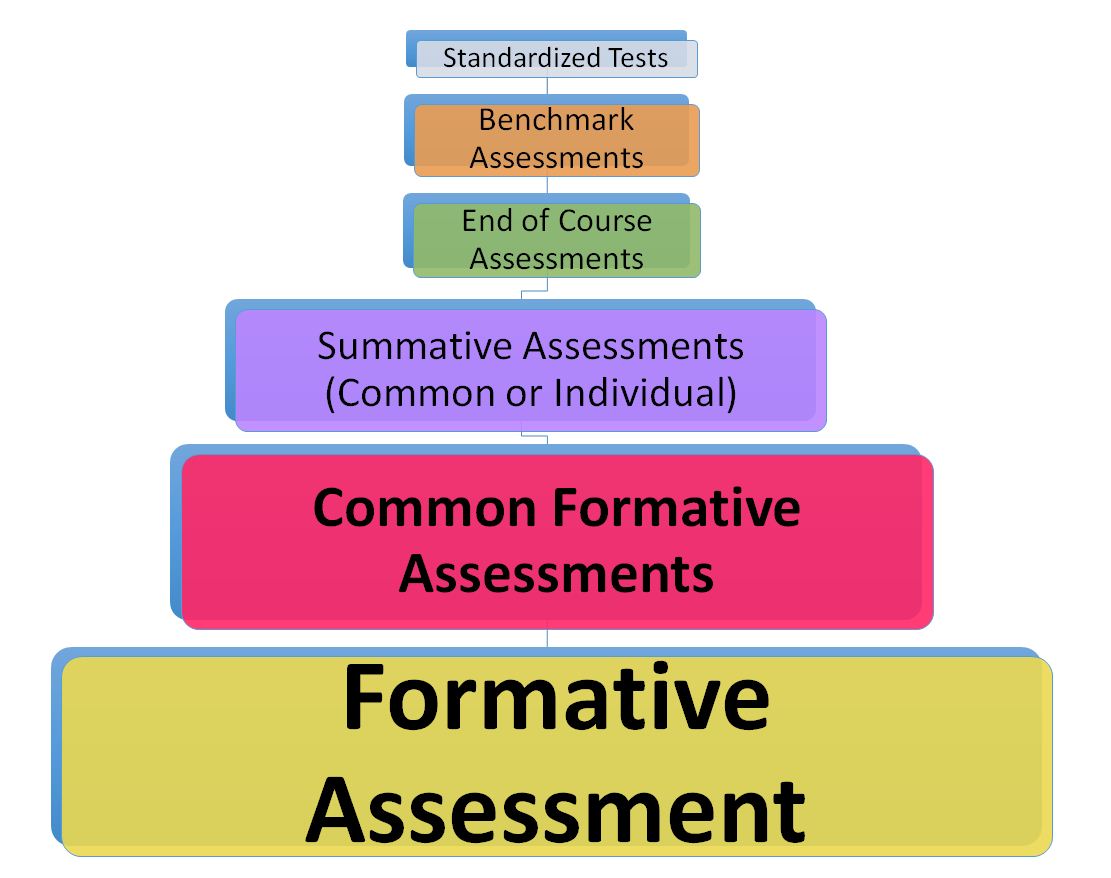

In a more balanced assessment practice, standards and learning targets are central. There is much more assessment used for formative purposes. For example, students use feedback to revise their work. In addition, students understand the learning so well, they can describe criteria of quality and then self-assess and track their own progress. These types of formative activities are happening on essential standards or learning targets. Formative assessment is where the majority of teachers and students spend their time and assessment energy. Collaborative teams work together to identify essential-to-know, hard-to-learn and hard-to-teach learning targets. They collect evidence (common formative assessments) of proficiency by student and essential learning to generate new ways to help students achieve at high levels. Classroom summative assessments are the evidence used to assess mastery of the intended learning. They are often graded. However, these too can be used formatively as a teacher recognizes students have not achieved the essential learning; students re-assess after learning more. The three types of assessment on the bottom of the graph are more influential on learning. The graph below represents a more balanced assessment practice, where teachers and students spend much more of their time and assessment energy on formative, common formative, and summative classroom assessment.

When students see and experience achievement on these essential learnings, they gain confidence. It is this confidence, along with achievement on essential learning, that affects those standardized test scores. That confidence and sense of achievement help students persevere. In places where there is an over-emphasis on standardized testing, students often think they have to memorize everything. So, when they get to something they don’t know on that standardized test, they don’t know how to think it through. They guess or skip it. With more confidence, students will develop the reasoning skills to stick with unfamiliar items and come up with a reasonable response.

When assessment is balanced in this way, there is less frustration regarding the feelings of being over-tested or having no time to teach because each type of assessment is used in an intentional and purposeful way focused on learning. To create a balanced assessment practice, consider the following:

- Identify essential standards and learning goals and map them out by unit or time frame.

- Develop formative practices where students are clear about the learning targets and success criteria, act on feedback, revise their work, and track growth on essential standards.

- Develop common formative assessments so that collaborative groups of teachers can identify the learning needs of individual students and groups of students and generate innovative instruction and intervention plans to ensure all students achieve those essential standards.

- Understand what is on the standardized test (review the standardized test blueprints) and identify just a couple of times throughout the year to teach students about the test and have them practice.

- Identify the different types of assessment information used in your school and district and sketch out the best use of that data.

- Frame the reason students need to learn something around relevance and success for the future, and avoid the rationale, “You have to know this because it is going to be on the test!”

Clarity around the best purpose and use of different types of assessments along with intentionally shifting time and energy to focus on information from assessment gives teachers and students more power and more meaningful information to ensure learning. Hattie eloquently stated, “Learning is spontaneous, individualistic, and often earned through effort. It is a timeworn, slow, gradual, fits-and-starts kind of process, which can have a flow of its own, but requires passion, patience, and attention to detail (from the teacher and the student)” (Hattie, 2009, p. 2). When assessment is used intentionally and for the purposes for which it was intended, learning, achievement, and confidence are achieved.

Allensworth, E., Correa, M., & Ponisciak, S. (2008, May). From high school to the future: ACT preparation—Too much, too late. Chicago: Consortium on Chicago School Research at The University of Chicago.

Amrein, A. L., & Berliner, D. C. (2003). The effects of high-stakes testing on student motivation and learning. Educational Leadership, 60(5), 32–38.

Hattie, J. A. C. (2009). Visible learning: A synthesis of over 800 meta-analyses relating to achievement. Routledge: Cambridge, MA.

Marsh, C., & Willis, G. (2007). Curriculum: Alternative approaches, ongoing issues (4th ed.). Upper Saddle River, NJ: Merrill.

Schneider, M. C., Egan, K. L., & Julian, M. W. (2013). Classroom assessment in the context of high-stakes testing. In J. H. McMillan (Ed.), Handbook of research on classroom assessment (pp. 55–68). Thousand Oaks, CA: SAGE.

Wiliam, D. (2013, June). How do we prepare students for a world we cannot imagine? Speech presented at the Minnetonka Leadership Institute, Minnetonka, Minnesota.
